
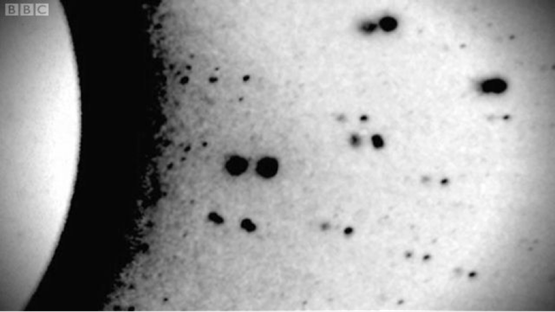

NOTE: subscripts appear as _ and superscripts as ^.

The US social democratic journal Jacobin recently published an article by Ben Burgis that was a half-hearted defence of Marx’s theory of exploitation. I say half-hearted because, as a prelude to their article, they effectively repudiated the theory that Marx used to demonstrate exploitation: the labour theory of value. They write:

> Like every other area of empirical inquiry, though, economics has changed a lot since Capital was published in 1867. Today, most economists — including many who are committed Marxists — reject the labor theory of value (LTV).

In this they are being far too generous to economics. Is contemporary economics really an area of empirical enquiry? I think not. It is better seen as primarily a field of ideological combat, one in which different political and class interests fight to defend their economic interests. So one has to ask: why do most economists reject the labour theory of value? Did empirical evidence come to light after 1867 that invalidated Marx, or was it all a matter of politics?

I would contend that it was the evident political threat of the socialist movement that motivated the rejection of the labour theory of value. If the labour theory of value is accepted, then Marx’s critique of capitalist exploitation becomes unavoidable. It would have been politically intolerable for colleges in capitalist countries to continue teaching the labour theory of value after the working classes won the right to vote. Instead, a new doctrine, ‘economics’ rather than ‘political economy’, had to be developed and introduced into the teaching curriculum.

The new ‘marginalist’ economics, pioneered by Jevons and Marshall in the English-speaking world, appeared very scientific. Jevons explicitly took static mechanics as his model, making extensive use of differential calculus in his maths[1].
But this was the form of science without the substance. In actual empirical sciences, like physics, one theory replaces another only if the new theory makes more accurate empirical predictions than the old one, or predicts hitherto unobserved phenomena. The replacement of Newton’s gravitational theory by General Relativity (1915) rested on correct new predictions. Einstein’s theory predicted gravitational lensing: that massive bodies would deflect the path of photons, and by twice the amount that a Newtonian calculation gives. This was confirmed in 1919 when, during a solar eclipse, the apparent positions of stars near the Sun were shifted as Einstein had predicted. Science calls such an observation a crucial experiment: one that tests the crux of a theory.

Photo from the 1919 solar eclipse. The photo is a superimposition of the negatives taken during the eclipse with one taken of the same section of sky on a night earlier in the year. Each star shows as a pair of dark spots. The outer spot is from the eclipse image; the inner one is from the earlier night. The apparent shift of the stars away from the Sun arose from the bending of starlight by the Sun’s mass.

Jevons published his theory of prices in 1871, but no crucial experiment was undertaken to validate it. There was nothing like the 1919 solar eclipse observation: no systematic observations comparing the predictions of Jevons with those of Ricardo and Marx. Instead the new theory gained acceptance by its ability to mimic the form of science. Neoclassical price theory, like gravitational theory, takes a mathematical form. Very many scientific theories are expressed in maths. But expressing something in maths, as a set of equations, is not enough to make it scientific. To be scientific it must be testable.
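In 1919 the crux was a number. A rough sketch, using rounded textbook constants and assuming the ray passes exactly at the Sun's limb, shows the two predictions the eclipse plates had to discriminate between: general relativity gives a deflection of 4GM/(c^2 R), while a Newtonian treatment of light as particles gives half of that.

```python
import math

# Physical constants (SI units), rounded to four significant figures.
G = 6.674e-11        # gravitational constant, m^3 kg^-1 s^-2
M_SUN = 1.989e30     # mass of the Sun, kg
R_SUN = 6.957e8      # radius of the Sun, m
C = 2.998e8          # speed of light, m/s

RAD_TO_ARCSEC = 180 / math.pi * 3600


def limb_deflection_arcsec(factor: float) -> float:
    """Deflection, in arcseconds, of a light ray grazing the Sun's limb.

    The angle is factor * G * M / (c^2 * R): factor = 4 for general
    relativity, factor = 2 for the Newtonian estimate.
    """
    return factor * G * M_SUN / (C**2 * R_SUN) * RAD_TO_ARCSEC


general_relativity = limb_deflection_arcsec(4)
newtonian = limb_deflection_arcsec(2)
print(f"General relativity: {general_relativity:.2f} arcsec")
print(f"Newtonian estimate: {newtonian:.2f} arcsec")
```

Run as written it should print roughly 1.75 and 0.88 arcseconds. The factor of two between them is what made the observation a test of the crux of the theory, rather than a mere confirmation that light bends.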
If a theory contains formulae, then the parameters in them must either be pre-specified mathematical constants like π or be derivable from observation, for example c, the speed of light. Theories of this sort lend themselves to experimental verification. A theory that has what are termed free variables, that is to say parameters that cannot be tied down by observation, even indirect observation, is untestable. Marginalist price theory is untestable for this very reason.

Consider the classic supply and demand diagram taught in economics classes. Here the quantity sold (q) and the price (p) at which it will be sold are predicted as the intersection of two functions, S(q) and D(q). It all looks very scientific[2], except that the textbooks do not give explicit formulae for S(q) or D(q). They do not even tell us what functional form S and D take. Are they parabolic functions? Hyperbolic ones? Exponential ones? If a physicist said that the orbits of stars around a galaxy were determined by the paths along which two functions were equal, but then neglected to give any formula for those functions, they would not be taken seriously. But since neoclassical price theory is not an empirical science but a branch of bourgeois moral philosophy, questions of mathematical form or experimental testability are ignored.

The professors are teaching this to naive teenagers. They impress them with visually concrete curves intersecting, without bothering to give any explicit formulae for the curves they plot. But suppose we take them at their word. Suppose we look at the standard text of US introductory economics and try to find out what formula the author was using for his curves. I digitised points on the textbook's curves and found that you cannot get an acceptable fit with anything less than a third-degree polynomial.
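The fitting test can be sketched as follows: fit polynomials of increasing degree by least squares and compare the residual error, bearing in mind that each extra degree adds one more free parameter. The digitised textbook points are not reproduced in this post, so the points below are hypothetical, generated from an invented cubic purely to illustrate the procedure.

```python
def solve(matrix, rhs):
    """Solve a square linear system by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    m = [row[:] + [b] for row, b in zip(matrix, rhs)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for k in range(col, n + 1):
                m[r][k] -= factor * m[col][k]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        x[r] = (m[r][n] - sum(m[r][k] * x[k] for k in range(r + 1, n))) / m[r][r]
    return x


def polyfit(xs, ys, degree):
    """Least-squares coefficients [a0, a1, ..., a_degree] via the normal equations."""
    n = degree + 1
    xtx = [[sum(x ** (i + j) for x in xs) for j in range(n)] for i in range(n)]
    xty = [sum(y * x ** i for x, y in zip(xs, ys)) for i in range(n)]
    return solve(xtx, xty)


def rms_error(xs, ys, coeffs):
    """Root-mean-square gap between the data and the fitted polynomial."""
    preds = [sum(c * x ** i for i, c in enumerate(coeffs)) for x in xs]
    return (sum((p - y) ** 2 for p, y in zip(preds, ys)) / len(ys)) ** 0.5


# Hypothetical "digitised" points on a supply curve, generated from a made-up
# cubic; they stand in for the real textbook points, which are not given here.
qs = [i / 2 for i in range(11)]  # q = 0.0, 0.5, ..., 5.0
ps = [2 + 0.5 * q - 0.3 * q**2 + 0.08 * q**3 for q in qs]

errors = {d: rms_error(qs, ps, polyfit(qs, ps, d)) for d in (1, 2, 3)}
for degree, err in errors.items():
    print(f"degree {degree} ({degree + 1} free parameters): RMS error {err:.4f}")
```

The normal equations are used here only because they keep the sketch dependency-free; for real digitised data a library least-squares routine would be numerically safer. The four cubic coefficients correspond to a, b, c and d in S(q) = a + bq + cq^2 + dq^3.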
A cubic fit means that the S(q) function must have the form

$$S(q) = a + bq + cq^2 + dq^3$$

Note that this has 4 parameters, a, b, c and d, that need to be tied down by observation to make a testable theory. Similarly, D(q) will require at least another 4 free parameters to draw the curve that the textbook shows. The price theory is supposed to explain two numbers, p and q, but to do so it invokes 8 unknown free variables, and the textbook does not tell students how to derive them.

Suppose we take some of the industries in the Standard Industrial Classification and ask how to derive the S and D functions for them. The textbooks give no answer. Why? Because the theory has been defined so as to make it systematically impossible to parameterise empirically. In any given time period you can observe only two numbers for each industry: for example, the tons of Grain Mill Products sold and the total price they were sold for. There is no way to work back from these to the free variables because, and this is the key gotcha, any change in price or quantity is explained by Samuelson as the result of S, D or both having changed. So observations can never be used to fix the free variables. We are left with a theory that is untestable even in principle.

In contrast, the labour theory of value specifies relations between empirical observables:

- $\lambda_i$, the number of person-years of effort used up, directly and indirectly, to produce the output of the i-th industry in the industrial classification;
- $V_i$, the monetary value of the output of the i-th industry in the industrial classification.

The theory states that these are linearly related, so that

$$V_i = M\lambda_i$$

where there is only one free variable, M, which Marxist economists term the Monetary Equivalent of Labour Time.
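A minimal sketch of how a test of V_i = Mλ_i can be computed. It assumes the usual input-output approach, in which the per-unit labour content λ satisfies λ = l + λA: direct labour l plus the labour embodied in the inputs used up, with A an input-output coefficient matrix. All the numbers are invented for illustration and are not data from any study. The sketch estimates M as total value divided by total labour, then computes the correlation r between industry labour totals and values, and r^2, the share of the variation in values that labour content accounts for.

```python
# Hypothetical 3-industry economy (all numbers invented for illustration).
# A[i][j]: units of industry i's product used up per unit of industry j's output.
A = [[0.1, 0.2, 0.0],
     [0.0, 0.1, 0.3],
     [0.2, 0.0, 0.1]]
l = [0.5, 0.3, 0.4]        # direct labour, person-years per unit of output
x = [100.0, 80.0, 60.0]    # gross output of each industry, units
V = [90.0, 70.0, 65.0]     # money value of each industry's output


def vertically_integrated_labour(A, l, tol=1e-12, max_iter=10_000):
    """Per-unit labour content: solve lam = l + lam A by fixed-point iteration.

    Each pass adds the labour embodied one step further back in the supply
    chain (the series l + lA + lA^2 + ...), which converges when the economy
    is productive (spectral radius of A below 1).
    """
    n = len(l)
    lam = list(l)
    for _ in range(max_iter):
        new = [l[j] + sum(lam[i] * A[i][j] for i in range(n)) for j in range(n)]
        if max(abs(a - b) for a, b in zip(new, lam)) < tol:
            return new
        lam = new
    raise RuntimeError("did not converge; is A productive?")


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(xs, ys))
    sxx = sum((a - mx) ** 2 for a in xs)
    syy = sum((b - my) ** 2 for b in ys)
    return sxy / (sxx * syy) ** 0.5


lam_unit = vertically_integrated_labour(A, l)
lam_total = [u * q for u, q in zip(lam_unit, x)]  # person-years per industry
M = sum(V) / sum(lam_total)                       # money per person-year
r = pearson(lam_total, V)
print("lambda_i:", [round(v, 2) for v in lam_total])
print("M:", round(M, 3))
print("r:", round(r, 3), "r^2:", round(r * r, 3))
```

Fixed-point iteration is used because it mirrors the "directly and indirectly" in the definition of λ_i; solving (I - A)^T λ^T = l^T directly with a linear solver gives the same result.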
The parameter M can be fixed by dividing the total monetary value of output in an economy by the total labour used. Every parameter is thus tied to observables, as should be the case in a scientific theory. Because of this the theory is empirically verifiable, and it has indeed been empirically verified in multiple studies by Marxist economists. To take just one paper, Cottrell and Cockshott found that for 49 US industries in the Standard Industrial Classification, the correlation between λ and V was 98.3%. That is a very strong correlation indeed. Squaring it (0.983^2 ≈ 0.966) shows that the labour content of industrial output accounts for about 97% of the variation in the monetary values of those industries.

Science requires replicable results. The LTV produces them. Very similar correlations have been obtained for Germany, for Sweden and for 12 other capitalist economies.

The work of Jevons, Marshall, Samuelson etc. on value theory does not stand up to even the most elementary scientific scrutiny. By the standards of rigour taught in the hard sciences, their maths and curves would be a joke, falling somewhere below the standard of astrology. But since this nonsense is taught in all seriousness to young students in US universities, it has a lasting effect even on those among them who wish to rebel against the existing order. That a socialist like Burgis still takes it seriously is testimony to what brilliant exponents of the conjuror’s art Jevons and his followers were.

Notes

[1] It is worth reading Mirowski, More Heat than Light, for a history of the physics envy of the marginalist economists.
[2] It is presumably this textbook formulation that Burgis is referring to in his article when he says “As economist and Jacobin contributing editor Mike Beggs notes, economists today think in terms of supply and demand schedules rather than supply and demand as forces operating on commodities — which makes Marx’s argument that something must account for prices when these forces are in balance much less compelling.”

Author

Paul Cockshott is an economist and computer scientist. His best-known books on economics are Towards a New Socialism and How The World Works. In computing he has worked on cellular automata machines, database machines, video encoding and 3D TV. In economics he works on Marxist value theory and the theory of socialist economy.

Archives June 2022
